Topic – Intuitive explanation on Generative Adversarial Networks Python | 2022

What are Generative Adversarial Networks (GANs)?

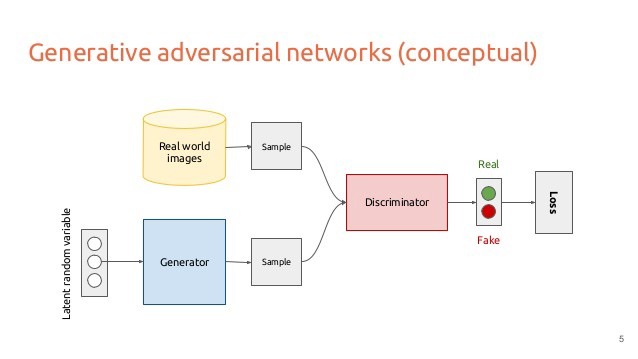

A Generative Adversarial Network is a neural-network architecture made up of 2 separate deep neural networks that compete with each other: the generator and the discriminator.

Wondering how your favorite FaceApp works?

GANs can create images that look like photographs of human faces, even though the faces don’t belong to any real person. In your FaceApp, GANs transform your images into images of people who do not even exist!

Hence, the goal of GANs is to generate new data points that closely resemble the data points in the training set.

Currently, people use GANs to generate various things: realistic images, 3D models, videos, and a lot more.

Now, you must be curious: how do generative adversarial networks work?

Let’s dive deep and explore Generative Adversarial Networks in depth!

There is a famous saying about GANs, which goes:

The generator tries to fool the discriminator, and the discriminator tries to keep from being fooled.

So, we will see what this famous quote means, and trust me, once you get hold of this quote, you know the core idea behind GANs!

What is a Generator?

The Generator is a kind of neural-network model that can generate new data instances, such as images or text, as per your data requirement.

Statistically, generators capture the joint probability p(X, Y), or just p(X) if there are no labels, where X is a set of data instances and Y is the set of their labels.
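To make "capturing p(X)" a little more concrete, here is a minimal sketch of a generator, assuming a toy one-dimensional setting that is not part of the original post. The class `LinearGenerator` and its parameters `scale` and `shift` are hypothetical names chosen for illustration; a real GAN generator would be a deep network producing images.

```python
import random


class LinearGenerator:
    """Toy generator (hypothetical): turns noise z ~ N(0, 1) into x = scale * z + shift."""

    def __init__(self, scale=1.0, shift=0.0):
        self.scale = scale
        self.shift = shift

    def generate(self, n, rng):
        # Each call draws fresh noise, so every sample is a new data instance.
        return [self.scale * rng.gauss(0.0, 1.0) + self.shift for _ in range(n)]


rng = random.Random(0)  # fixed seed so the run is repeatable
gen = LinearGenerator(scale=0.5, shift=4.0)
samples = gen.generate(1000, rng)
mean = sum(samples) / len(samples)
print(f"mean of generated samples: {mean:.2f}")
```

The point of the sketch is only that a generator is a function from random noise to data: sampling from it is how it represents a distribution p(X).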

Being able to generate is a strong sign of machine intelligence: if a model can generate convincing data, it has, in some sense, understood that data. Hence, generators are very important.

Look at the above picture. The generator has generated numbers that even humans cannot distinguish from the real images!

AI CAN FOOL YOU! 😛

What is a Discriminator?

The Discriminator is again a deep neural network model, one that discriminates between different kinds of data instances: here, real instances and the ones generated by our generator.

A discriminative model ignores whether a given instance is likely, and just tells you how likely a label is to apply to the instance.

In terms of statistics and probability theory, discriminative models capture the conditional probability p(Y | X), that is, the probability of Y given X!
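As a rough illustration of p(Y | X), the sketch below uses a one-dimensional logistic classifier as a stand-in discriminator. The function names and the hand-picked parameters `w` and `c` are assumptions made for this example only; a real GAN discriminator would be a deep network with learned weights.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def discriminator(x, w, c):
    """Return the modelled probability p(Y = real | X = x)."""
    return sigmoid(w * x + c)


# Hypothetical hand-picked parameters: the decision boundary sits at x = 2.
w, c = 2.0, -4.0

p_far = discriminator(5.0, w, c)       # well on the "real" side
p_boundary = discriminator(2.0, w, c)  # exactly on the boundary
p_low = discriminator(-1.0, w, c)      # well on the "fake" side
print(p_far, p_boundary, p_low)
```

Notice that the discriminator never asks how likely the input itself is; it only scores how likely the label "real" is for that input.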

Now let us sum up these ideas and see what the quote wants to tell us.

- The generator learns to generate data.

- The discriminator learns to distinguish the generator’s fake data from real data.

- The discriminator penalizes the generator for producing implausible results.

The generator will try to generate fake images that fool the discriminator into thinking that they’re real.

The discriminator, in turn, tries to distinguish between a real and a generated image as well as it can whenever an image is fed to it.
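The "penalty" mentioned above is usually expressed as a loss. The sketch below assumes binary cross-entropy for the discriminator and the commonly used non-saturating loss for the generator; the post itself does not name a loss, so treat both functions and the example scores as illustrative assumptions.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples should score 1, fakes should score 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants its fakes to score 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Hypothetical discriminator scores (probability of "real").
d_real = [0.9, 0.8]
caught = [0.1, 0.2]   # fakes the discriminator sees through
fooled = [0.9, 0.8]   # fakes the discriminator believes

loss_g_caught = generator_loss(caught)
loss_g_fooled = generator_loss(fooled)
loss_d_caught = discriminator_loss(d_real, caught)
loss_d_fooled = discriminator_loss(d_real, fooled)
print(loss_g_caught, loss_g_fooled, loss_d_caught, loss_d_fooled)
```

When the fakes are caught, the generator's loss is high and the discriminator's is low; when the fakes fool the discriminator, it is the other way round. That tug of war is exactly what the quote describes.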

YAY! Now the quote makes sense!

How do you train a generative adversarial network?

To train a GAN, we train both the generator and the discriminator so that they get stronger together.

We train them together because, if one network is too strong, the other will not be able to keep up. So, you will end up with two dumb networks.

In a nutshell, when training begins, the generator produces fake data, and the discriminator quickly learns to tell that it’s fake.

Finally, if generator training goes well, the discriminator gets worse at telling the difference between real and fake.

It starts to classify fake data as real, and its accuracy decreases. In the ideal case it drops toward 50%, no better than flipping a coin.
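Here is a small sketch of what "accuracy decreases" looks like in numbers. The helper `discriminator_accuracy`, the 0.5 threshold, and all the scores are made up for illustration; they are not measurements from a trained model.

```python
def discriminator_accuracy(d_real, d_fake, threshold=0.5):
    """Fraction of samples labelled correctly when scores >= threshold mean 'real'."""
    correct = sum(p >= threshold for p in d_real) + sum(p < threshold for p in d_fake)
    return correct / (len(d_real) + len(d_fake))


# Hypothetical scores early in training: the fakes are easy to spot.
early_real = [0.9, 0.8, 0.95, 0.7]
early_fake = [0.1, 0.2, 0.05, 0.3]

# Hypothetical scores later on: most fakes now pass as real.
late_real = [0.9, 0.8, 0.95, 0.7]
late_fake = [0.6, 0.4, 0.7, 0.55]

early_accuracy = discriminator_accuracy(early_real, early_fake)
late_accuracy = discriminator_accuracy(late_real, late_fake)
print(early_accuracy, late_accuracy)
```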

Remember, we are in support of our generator team. The discriminator has to lose the game!

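Since the generator and the discriminator are both neural networks, the discriminator’s feedback reaches the generator through backpropagation: the gradients flowing back from the discriminator’s output tell the generator how to update its weights.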

Training the generator

The generator must try to make the discriminator output 1 (real) when given its generated image.

Now, this is an interesting part.

The generator must ask for suggestions from the discriminator!

Technically, you do that by back-propagating the gradients of the discriminator’s output with respect to the generated image.

That way, you will have a gradient tensor that has the same shape as the image.

Now we back-propagate these image gradients further into all the weights that make up the generator.

Therefore, the generator learns how to generate a new image that the discriminator finds more convincing.
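The chain of gradients described above can be sketched by hand in a toy one-dimensional setting. Everything here is an assumption for illustration: the generator is x = a * z + b, the discriminator is a frozen logistic model with parameters `w` and `c`, and the loss is the non-saturating -log D(x). In practice a framework's automatic differentiation does this for you.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def mean_score(a, b, w, c, noise):
    """Average discriminator score on the generator's samples."""
    return sum(sigmoid(w * (a * z + b) + c) for z in noise) / len(noise)


def generator_step(a, b, w, c, noise, lr=0.1):
    """One gradient step on the generator (a, b); the discriminator (w, c) is frozen."""
    grad_a = grad_b = 0.0
    for z in noise:
        x = a * z + b                # generated sample (the "image")
        d = sigmoid(w * x + c)       # discriminator's belief that x is real
        grad_x = -(1.0 - d) * w      # gradient of -log D(x) with respect to x
        grad_a += grad_x * z         # chain rule into the generator's weights
        grad_b += grad_x
    n = len(noise)
    return a - lr * grad_a / n, b - lr * grad_b / n


noise = [-1.0, 0.0, 1.0]
a, b = 1.0, 0.0      # hypothetical starting generator
w, c = 1.0, -3.0     # hypothetical frozen discriminator that prefers larger x

score_before = mean_score(a, b, w, c, noise)
new_a, new_b = generator_step(a, b, w, c, noise)
score_after = mean_score(new_a, new_b, w, c, noise)
print(score_before, score_after)
```

Here `grad_x` plays the role of the image-shaped gradient: it is computed from the discriminator's output first and only then pushed into the generator's own parameters.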

Training the discriminator

The discriminator in a GAN is simply a classifier.

It tries to distinguish real data from the data created by the generator.

The training data comes from two sources:

- Real data instances, such as real pictures of people.

- Fake data instances created by the generator.

During training, the discriminator ignores the generator loss and just uses the discriminator loss.

Note: During discriminator training the generator stays quiet: its weights are held constant while it produces the fake examples.
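A minimal sketch of one discriminator update, under the same kind of toy assumptions (a one-dimensional logistic discriminator with hypothetical parameters `w` and `c`, trained with binary cross-entropy). The fake samples are passed in as plain numbers, so nothing in this step can change the generator.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def discriminator_step(w, c, real, fake, lr=0.1):
    """One gradient step on the discriminator (w, c) using only the discriminator loss."""
    grad_w = grad_c = 0.0
    for x in real:                   # label 1: loss is -log D(x)
        d = sigmoid(w * x + c)
        grad_w += -(1.0 - d) * x
        grad_c += -(1.0 - d)
    for x in fake:                   # label 0: loss is -log(1 - D(x))
        d = sigmoid(w * x + c)
        grad_w += d * x
        grad_c += d
    n = len(real) + len(fake)
    return w - lr * grad_w / n, c - lr * grad_c / n


real = [3.8, 4.0, 4.2]    # hypothetical real data
fake = [-0.2, 0.0, 0.2]   # hypothetical generator output, treated as fixed
w, c = 0.0, 0.0           # untrained discriminator: scores everything 0.5

new_w, new_c = discriminator_step(w, c, real, fake)
real_score = sigmoid(new_w * 4.0 + new_c)
fake_score = sigmoid(new_w * 0.0 + new_c)
print(real_score, fake_score)
```

After a single step the discriminator already scores a typical real sample higher than a typical fake one.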

Great!

Wrapping It Up!

The above processes continue till we reach an equilibrium.
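Putting the two training steps together, the alternating process might look like the sketch below. It reuses the toy one-dimensional assumptions (linear generator x = a * z + b, logistic discriminator, hand-written gradients, fixed noise and data), none of which come from the original post. It only runs a few rounds; with such a simple setup, longer runs are not guaranteed to settle into an equilibrium.

```python
import math


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def discriminator_step(w, c, real, fake, lr):
    """Update the discriminator; the fake samples are fixed numbers here."""
    grad_w = grad_c = 0.0
    for x in real:
        d = sigmoid(w * x + c)
        grad_w += -(1.0 - d) * x
        grad_c += -(1.0 - d)
    for x in fake:
        d = sigmoid(w * x + c)
        grad_w += d * x
        grad_c += d
    n = len(real) + len(fake)
    return w - lr * grad_w / n, c - lr * grad_c / n


def generator_step(a, b, w, c, noise, lr):
    """Update the generator; the discriminator (w, c) is frozen here."""
    grad_a = grad_b = 0.0
    for z in noise:
        d = sigmoid(w * (a * z + b) + c)
        grad_x = -(1.0 - d) * w
        grad_a += grad_x * z
        grad_b += grad_x
    n = len(noise)
    return a - lr * grad_a / n, b - lr * grad_b / n


real = [3.5, 4.0, 4.5]     # hypothetical real data, mean 4.0
noise = [-1.0, 0.0, 1.0]   # fixed noise so the run is deterministic
a, b = 1.0, 0.0            # generator starts far from the real data
w, c = 0.0, 0.0            # discriminator starts undecided

for _ in range(20):
    fake = [a * z + b for z in noise]
    w, c = discriminator_step(w, c, real, fake, lr=0.1)   # generator stays quiet
    a, b = generator_step(a, b, w, c, noise, lr=0.1)      # discriminator stays frozen

real_mean = sum(real) / len(real)
fake_mean = sum(a * z + b for z in noise) / len(noise)
print(f"real mean: {real_mean:.2f}, generated mean: {fake_mean:.2f}")
```

Each round, the discriminator learns a little more about where the real data lives, and the generator uses that knowledge to move its samples in the same direction.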

Once both networks are clever enough, the generator will generate realistic images, and the discriminator cannot distinguish these cleverly generated images from real ones anymore.

Bravo! We won the match!

But remember, the two networks aren’t simply fighting each other; they have to improve together to achieve their joint goal.

They both get stronger together and hopefully reach an equilibrium.

Awesome!

If you want to run an interactive version of GANs, I recommend you try this tutorial with a TensorFlow notebook!

Conclusion: Generative Adversarial Networks Python

Hope you had fun learning about GANs in depth! They are among the most clever and popular machine learning algorithms discovered so far.

Further, you must try out the TF-GAN library to make a GAN. You will definitely enjoy it!

Contact us for more such posts!